Seedance 2.0 on LibTV: A Solution for Card Pull Anxiety

The much-anticipated public beta of Seedance 2.0 has finally opened to AI video creators, who are relieved that they no longer have to wait six hours in a queue to access it. This marks a significant structural shift in the AI video landscape.

With the closure of Sora and the rise of Seedance 2.0, creators are now seeking more effective, cost-efficient, and seamless video tools. This presents a critical opportunity for players in the AI video sector.

An AI industry analyst noted, “In the current landscape, whoever can access the full version of Seedance 2.0 without waiting, while delivering good results and stability, will have a competitive advantage.”

Among AI video platforms, LibTV, a product of LiblibAI, has responded to this shift especially quickly. It was the first third-party product to integrate the Seedance 2.0 series, and it had already run large-scale tests of the model’s capabilities two weeks earlier in the StarVideo format. This suggests a deeper collaboration between LibTV and ByteDance than many had expected.

1. LibTV + Seedance 2.0: The Arrival of the “AI All-Round Director”

In today’s AI video landscape, the competition centers around one key question: Who can efficiently and reliably translate creative ideas into high-quality works?

Seedance 2.0, which drew massive attention during the Spring Festival, showcased the potential for strong consistency in characters and scenes, stable motion, and effective scene transitions, all of which make the generated footage more usable. However, problems such as model downgrades and long queues have hurt the user experience.

With LibTV, users need not worry about these issues. We tested it with a challenging sports scene that required complex camera angles and motion control. From simple prompts, LibTV produced a high-energy sports montage of gymnastics, boxing, swimming, and parkour in under three minutes, showcasing its speed advantage.

In contrast, submitting the same prompts to the official platform resulted in the familiar queue delays.

LibTV was not only faster but also spared us the dreaded card pulls (repeatedly regenerating until a usable clip appears). It delivered smooth, detailed shots of gymnasts, boxers, and other athletes, mixing medium shots and close-ups, and even added an unexpected transition.

We ran quick tests of Seedance 2.0 on LibTV in three typical scenarios: AI commercials, AI dramas, and trending TikTok-style videos. For the commercial, which focused on product details and multi-angle displays, LibTV used more than 20 features to generate a polished 10-second perfume ad that captured character expressions and usage scenarios well.

Compared with the official platform and other third-party platforms, LibTV acted as a “versatile director”, realizing ideas with speed, stability, and superior results.

The LibTV team has done significant work behind the scenes, optimizing the entire pipeline from model invocation logic to generation parameters. This explains why LibTV achieves a higher rate of usable outputs and better detail with the same prompts.

For AI drama teams, LibTV’s advantages are even more pronounced. These teams generally fall into two camps: those that prioritize efficiency and those that prioritize quality. LibTV’s 2-3 minute turnaround suits high-volume production, while its storytelling and aesthetic capabilities appeal to creators of high-quality dramas.

In one 15-second test, the narrative quality and richness were surprisingly strong. LibTV took a director’s approach, breaking the protagonist’s spellcasting into a series of compact beats, from close-ups to dramatic environmental changes, that added up to a cohesive visual flow.

We also replicated a popular TikTok-style pet video featuring an orange cat’s daily life, demonstrating LibTV’s ease of use.

During our tests, we found that LibTV has updated two features that directly address creators’ pain points: the subject library and image editing.

The new mirroring and rotation features speed up scene breakdowns, letting creators quickly produce matching storyboard frames without creating new images.

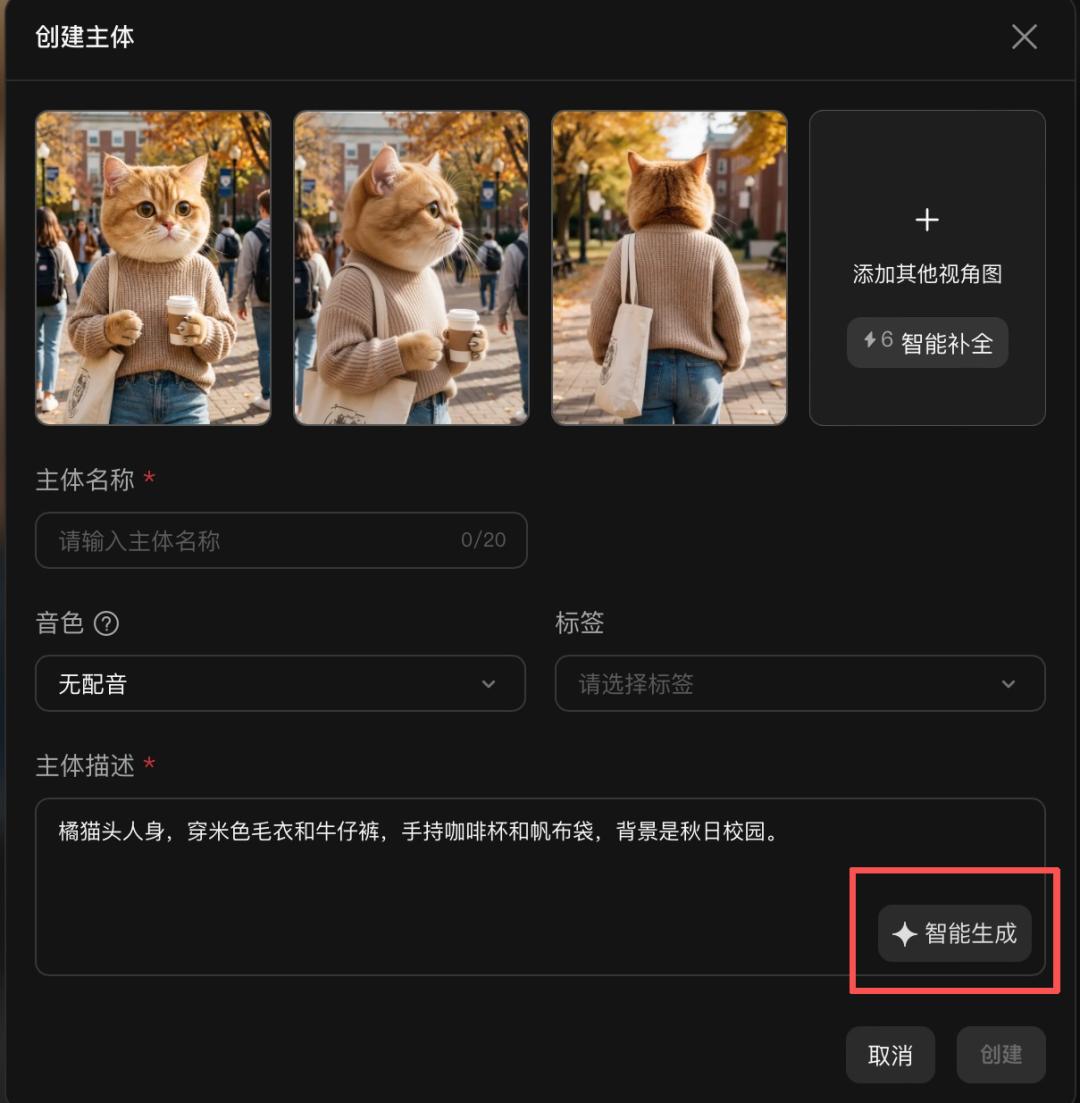

The subject library now accepts audio uploaded as video files, so creators no longer need to extract the audio separately. Character voices can be selected directly from historical assets, and descriptions are generated automatically, with no manual editing.

These small feature updates follow a common logic: greater efficiency, less effort, and better reusability.

2. Why is LibTV the “Dark Horse”?

Even before LibTV integrated Seedance 2.0, many creators of AI short films and movies had noticed this “dark horse” of the AI video arena. LiblibAI, the company behind LibTV, is less than three years old but has grown rapidly. In October of last year, it closed the largest funding round in China’s AI application sector to date, and LibTV drew more than 100,000 visits on its first day.

In the current AI video landscape, entrepreneurs generally fall into two categories: those developing underlying models that provide foundational generation capabilities, and those building tools on top of these models. However, many tool makers lack both a deep understanding of creators’ needs and the ability to control the models.

LibTV belongs to the latter category but has avoided this dilemma by positioning itself distinctly from the start: as a video creation system built for both human creators and agents.

As a result, LibTV has clear answers to the fundamental questions about AI video: who is it for, and where is it headed?

On one end are human creators. For them, LibTV connects nodes for five basic modalities (text, images, video, audio, and scripts) into a controllable workflow, letting creators visualize their projects within a single workspace.

LibTV also functions as an industrial production line. AI drama creators can package aesthetic styles, fixed shots, and character IP assets into a reusable pipeline, then produce new stories or scenes with minimal adjustments.

On the other end are agents. LibTV packages its capabilities as Skills, so coding agents and other agents can install LibTV skills and access top models such as Seedance 2.0. With simple natural-language commands, agents can now generate five-minute short films or create new music videos from a single audio file.

In essence, LibTV’s primary mission is to build a “scaffold” for the two most critical subjects in video creation: creators and agents.

From an industry perspective, many AI video creators are still stuck with the unpredictable results of card pulls, high costs, and anxiety over an elusive formula for going viral. Has the barrier to AI video creation truly been lowered? Not yet, it seems.

LibTV has made a promising start. With no waiting, simple access, continually improving features, and generous discounts, it brings advanced model capabilities to more creators, lowers the barrier to AI video creation, and lets creators focus on creative content.

LibTV currently offers a membership that includes free access to Seedance 2.0 and Kling, with up to 180 video generations. For heavy users such as super creators and short-drama studios, the savings are significant: packages such as Kling o3+3.0, libnano Pro, and libnano 2 are priced 30% below the original models.

AI video is expanding rapidly, yet according to Grand View Research, global market penetration was only about 1.4% as of 2025, leaving vast room for growth.

If 2025 is seen as the breakout year for AI video generation in China, then 2026, with the evolution of large video models and the iteration of AI video tools, is poised to be a year of accelerated penetration and implementation of AI video technology.

This future may already be unfolding on the limitless canvas of LibTV.
